When talking to people about Microsoft Azure one of the biggest concerns is cost management and how to avoid runaway spend. In this post I’ll share my tips for optimising Azure expenditure. In my organisation I’ve used these tips to reduce Azure expenditure by around 50%.

Right size CPU

If you use the Azure portal to create a Windows Server 2016 VM, the default size as of Nov 18 is a DS1 v2 which costs £72.36 a month in West Europe. If you don’t take the time to check what’s the most appropriate VM series and size you could be paying more than you need to, or under sizing your VM. If you’re running a low end production server, or dev/test server, you could use a B series VM such as a B2S, which costs £35.26 a month. In this case you’ve instantly saved ~50%.

Information on VM sizes is available here. If you’re sizing for a workload that requires high storage throughput take care to check the disk IOPs and throughput measurements. You don’t want to under size your VM, but you don’t want to over pay either.

When sizing for high CPU performance check the compute benchmark scores as the CPU performance varies between VM series.

If you want to resize a VM, Azure Automation is a great way, see here for example code in PowerShell.

Right size disk

If you use the Azure portal to create a Windows Server 2016 VM, the default disk type is premium SSD. A 128 GB premium SSD disk is £16.16 a month. If you don’t require a single instance SLA, or high performance, you could use a standard SSD disk at £7.15 a month + transaction costs. Again, this is a ~50% saving.

Information on disk type is available here. If you chose to use premium storage for performance reason be sure your workload can utilise the disk fully. Information on how to monitor this is available here.

Managed disks allow you to switch between the disk types, e.g. standard HDD, standard SSD and premium SSD. You can use Azure Automation to automate the change, see here for example code.

Right time

Once you have the right VM and disk types think about when you need to run the VM. If you have a dev/test workload you could use automation to only run the VM during the working day, 08:00-18:00. By running the VM for 10 hours a day rather than 24 you can save significantly on the compute costs. Note that you continue pay storage costs when the VM is turned off.

From the VM blade in the Azure portal you can configure auto-shutdown to turn of the VM at a set time each day. For more advanced start/stop scenarios look for PowerShell solutions such as this.

Reserved Instances

Reserved Virtual Machine Instances enable you to make significant savings on compute costs. The saving varies by VM series and the duration of the reservation, but 1 year reservation for a D2s v3 VM is 36%, and for a F2s v2 it’s 24%, both significant savings.

Clean up

Don’t waste money on resources you no longer need. As mentioned earlier, even if you turn off a VM you still pay storage costs. If you delete a VM the disk is left behind, again costing money. Azure Automation is a great way to identify and remove unattached disks, see here for example code. Don’t forget to look for other resources that are no longer used, e.g. unattached public IP addresses.

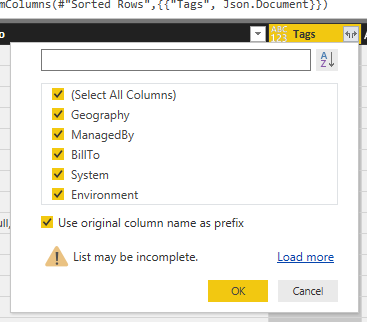

Tagging

When you create a resource group, add appropriate tags to enable you to identify the system, owner and role for the resources contained with it. Use automation to cascade the tags to all resources within the group; example code is available here. You can then use Power BI as I describe here to create detailed reports on usage by tag, resource type etc. Combining tagging with Power BI is a very powerful combination.